A Holistic QA & Testing Strategy: 25 Critical Components of a Testing Strategy

In modern software development and service delivery, a reactive “test-last” approach is no longer sufficient. A robust QA strategy is proactive, data-driven, and integrated into the entire lifecycle. Below is a structured framework of 25 critical components, from foundational definitions to advanced analytics and operational protocols.

1. Defining Use Case

A use case describes how an end-user interacts with the system to achieve a goal. It bridges business requirements and technical implementation. Without clearly defined use cases, testing lacks context, leading to irrelevant test scenarios and missed real-world workflows.

2. Defining Test Cases

Test cases are step-by-step instructions to validate specific functionality against expected results. They ensure repeatability, traceability to requirements, and coverage. Well-documented test cases enable efficient regression testing and onboarding of new team members.
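
A test case can also be kept as structured data rather than free text, which makes traceability checkable. The sketch below is a minimal Python illustration. The `TestCase` fields and the `REQ-` identifiers are hypothetical, not the API of Jira or any test-management tool. It reports requirements that no test case traces back to.

```python
from dataclasses import dataclass


@dataclass
class TestCase:
    """One repeatable check, traced back to the requirement it validates."""
    case_id: str
    requirement_id: str  # hypothetical requirement key used for traceability
    steps: list[str]
    expected_result: str


def uncovered_requirements(requirements: set[str], cases: list[TestCase]) -> set[str]:
    """Return requirement IDs that no test case traces back to."""
    covered = {case.requirement_id for case in cases}
    return requirements - covered


cases = [
    TestCase("TC-001", "REQ-LOGIN",
             ["Open login page", "Enter valid credentials", "Submit"],
             "User lands on the dashboard"),
    TestCase("TC-002", "REQ-LOGIN",
             ["Open login page", "Enter wrong password", "Submit"],
             "Error message shown; no session created"),
]
print(sorted(uncovered_requirements({"REQ-LOGIN", "REQ-RESET"}, cases)))  # ['REQ-RESET']
```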

3. Environment Readiness

The testing environment must mirror production in configuration, data, and dependencies. Validating readiness prevents false positives/negatives caused by environmental issues (e.g., wrong versions, stale data). This includes verifying network access, test data seeding, and service mock availability.
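
Version drift is one of the easiest readiness problems to check automatically. Here is a minimal sketch. It assumes you can already obtain the deployed version of each component, for example from a health endpoint or a deployment manifest. The component names and versions are made up.

```python
def readiness_report(expected: dict[str, str], actual: dict[str, str]) -> list[str]:
    """Compare expected component versions with what is deployed in the test environment.

    Returns human-readable problems; an empty list means the environment looks ready.
    """
    problems = []
    for component, want in expected.items():
        have = actual.get(component)
        if have is None:
            problems.append(f"{component}: missing (expected {want})")
        elif have != want:
            problems.append(f"{component}: version {have}, expected {want}")
    return problems


# Hypothetical components; "test-data-seed" stands for a versioned test data set.
expected = {"orders-api": "2.4.1", "payments-mock": "1.0.3", "test-data-seed": "2024-06-01"}
deployed = {"orders-api": "2.4.0", "payments-mock": "1.0.3"}
for problem in readiness_report(expected, deployed):
    print(problem)
# orders-api: version 2.4.0, expected 2.4.1
# test-data-seed: missing (expected 2024-06-01)
```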

4. Testers’ Profiles

Define the skills, experience level, and domain knowledge required for each testing role (e.g., automation engineer, security tester, UAT lead). Matching profiles to complexity ensures efficiency. For instance, exploratory testing needs creative testers, while compliance testing requires meticulous, rule-driven minds.

5. Entry Criteria

A checklist of conditions that must be met before testing begins (e.g., build deployed, smoke tests passed, test data ready). This gates poor-quality builds from entering the testing phase, saving effort and reducing defect churn. It enforces discipline among development teams.

6. Exit Criteria

Conditions that formally allow testing to conclude (e.g., 95% test case pass rate, no open Critical/High bugs, code coverage thresholds met). Exit criteria prevent premature sign-off and provide an objective measure of release readiness, reducing arguments over a subjective “feeling of quality.”
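
Exit criteria are easiest to enforce when code evaluates them instead of opinion. The sketch below encodes the three examples above. The 95% pass rate comes from the text. The 80% coverage threshold and the `TestSummary` shape are assumptions, so replace them with your own policy.

```python
from dataclasses import dataclass


@dataclass
class TestSummary:
    executed: int
    passed: int
    open_defects_by_severity: dict[str, int]
    line_coverage: float  # fraction in [0, 1]


def exit_criteria_failures(s: TestSummary,
                           min_pass_rate: float = 0.95,
                           min_coverage: float = 0.80,  # assumed threshold; set your own
                           blocking_severities=("Critical", "High")) -> list[str]:
    """Return the exit criteria that are NOT met; an empty list means testing may conclude."""
    failures = []
    pass_rate = s.passed / s.executed if s.executed else 0.0
    if pass_rate < min_pass_rate:
        failures.append(f"pass rate {pass_rate:.1%} < {min_pass_rate:.0%}")
    for severity in blocking_severities:
        count = s.open_defects_by_severity.get(severity, 0)
        if count:
            failures.append(f"{count} open {severity} defect(s)")
    if s.line_coverage < min_coverage:
        failures.append(f"coverage {s.line_coverage:.0%} < {min_coverage:.0%}")
    return failures


summary = TestSummary(executed=400, passed=386,
                      open_defects_by_severity={"High": 1, "Medium": 7},
                      line_coverage=0.83)
print(exit_criteria_failures(summary))  # ['1 open High defect(s)']
```

In this example, the 96.5% pass rate and the 83% coverage both clear their thresholds. The single open High defect still blocks sign-off.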

7. Measuring KPIs (Key Performance Indicators)

Quantitative metrics such as Defect Density, Test Execution Velocity, Mean Time to Detect (MTTD), and Test Case Pass % over time. KPIs offer visibility into process health, help forecast release timelines, and highlight improvement areas. Without KPIs, quality becomes a guess.
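
The formulas behind these KPIs are simple. The hard part is collecting consistent inputs. Here is a minimal sketch. It assumes defect density is measured per thousand lines of code (KLOC). It also assumes MTTD is the average gap between when a defect was introduced (e.g., its commit time) and when it was logged. Other MTTD definitions exist, so use whichever your team agrees on.

```python
from datetime import datetime, timedelta
from statistics import mean


def defect_density(defects: int, lines_of_code: int) -> float:
    """Defects per thousand lines of code (KLOC)."""
    return defects / (lines_of_code / 1000)


def pass_percentage(passed: int, executed: int) -> float:
    """Share of executed test cases that passed, as a percentage."""
    return 100.0 * passed / executed


def mean_time_to_detect(pairs: list[tuple[datetime, datetime]]) -> timedelta:
    """Average gap between introduction and detection.

    Assumes each pair is (introduced_at, detected_at), e.g. commit time vs. defect logged time.
    """
    seconds = mean((detected - introduced).total_seconds() for introduced, detected in pairs)
    return timedelta(seconds=seconds)


print(defect_density(18, 45_000))  # 0.4 defects per KLOC
print(pass_percentage(372, 400))   # 93.0
pairs = [(datetime(2024, 5, 1, 9), datetime(2024, 5, 2, 9)),    # detected after 24 h
         (datetime(2024, 5, 3, 9), datetime(2024, 5, 3, 21))]   # detected after 12 h
print(mean_time_to_detect(pairs))  # 18:00:00
```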

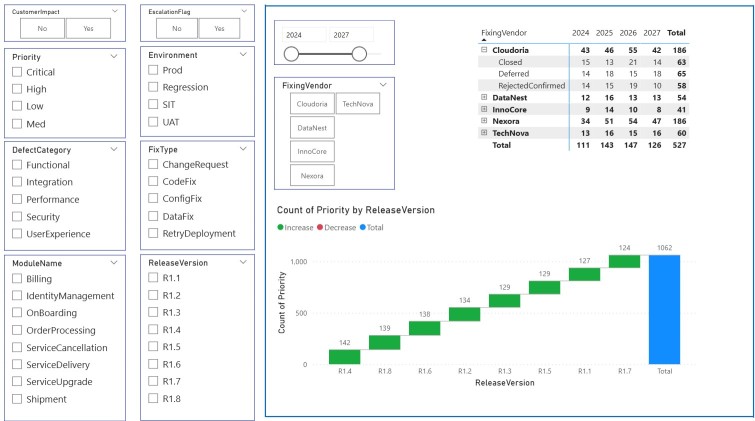

8. Analyzing Root Cause

When a defect escapes to production, root cause analysis (RCA) investigates why it happened: not just the technical error but also the process gaps behind it (e.g., a missing test type or an unclear requirement). RCA turns failures into systemic improvements, reducing recurrence by 40-60% over time.

9. Trend Analysis

Tracking metrics (defect arrival rate, test pass rate, regression failures) across sprints or releases reveals patterns. An increasing defect trend mid-sprint may indicate technical debt, and a decreasing test execution rate may signal environment instability. Trend analysis enables predictive quality management, not just historical reporting. Check out our dedicated section on how to create effective business intelligence tools using Power BI.
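
One way to move from eyeballing charts to flagging a trend combines two tools. A moving average smooths sprint-to-sprint noise, and the slope of a least-squares line quantifies the direction. Here is a sketch with illustrative sprint data (not real numbers):

```python
def moving_average(values: list[float], window: int = 3) -> list[float]:
    """Average of each run of `window` consecutive values."""
    return [sum(values[i - window + 1:i + 1]) / window
            for i in range(window - 1, len(values))]


def least_squares_slope(values: list[float]) -> float:
    """Slope of the best-fit line through (0, v0), (1, v1), ...; > 0 means an upward trend."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two data points for a trend")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    num = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    den = sum((x - x_mean) ** 2 for x in range(n))
    return num / den


defects_per_sprint = [12, 14, 13, 17, 19, 22]  # illustrative numbers, not real data
print([round(v, 1) for v in moving_average(defects_per_sprint)])  # [13.0, 14.7, 16.3, 19.3]
print(round(least_squares_slope(defects_per_sprint), 2))          # 1.97
```

A slope of about 2 means roughly two more defects arrive in each sprint than in the one before. Deciding what counts as alarming remains a team decision.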

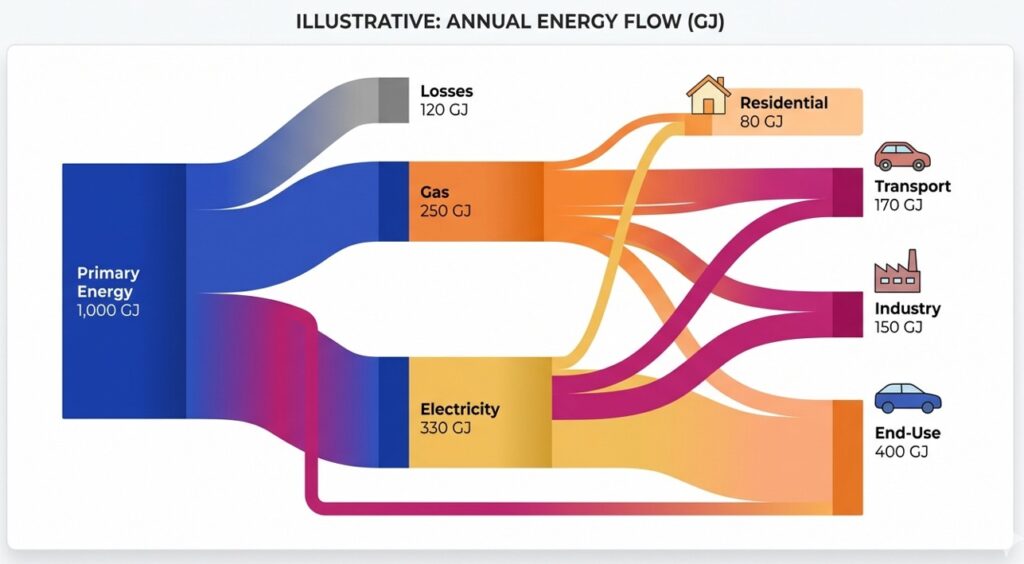

10. Sankey Diagrams

A Sankey diagram visualizes flow, e.g., defect states (New → Triaged → In Progress → Verified → Closed) or test case results (Pass → Fail → Blocked → Rerun). The width of each band is proportional to the volume flowing through it, so bottlenecks stand out. This helps teams quickly identify where work accumulates (e.g., too many defects stuck in “Reopen”) without wading through raw data.
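
Most Sankey tools expect weighted links of the form (source, target, value). The minimal sketch below derives those links from each defect's ordered status history. The `BUG-` IDs and histories are invented. In practice, the history would come from your tracker's changelog.

```python
from collections import Counter


def sankey_links(histories: dict[str, list[str]]) -> list[tuple[str, str, int]]:
    """Turn each defect's ordered state history into weighted (source, target, count) links."""
    counts = Counter()
    for states in histories.values():
        # Every consecutive pair of states is one transition.
        for source, target in zip(states, states[1:]):
            counts[(source, target)] += 1
    return [(s, t, n) for (s, t), n in counts.most_common()]


histories = {
    "BUG-1": ["New", "Triaged", "In Progress", "Verified", "Closed"],
    "BUG-2": ["New", "Triaged", "In Progress", "Verified", "Reopen", "In Progress"],
    "BUG-3": ["New", "Triaged", "In Progress"],
}
for link in sankey_links(histories):
    print(link)
```

Plotting libraries typically need node indices rather than labels. Plotly's Sankey trace, for example, takes `source`, `target`, and `value` arrays, so map each state name to an index before drawing.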

11. Defining RASCI Matrix

RASCI clarifies roles: Responsible (does the work), Accountable (owns the outcome), Supportive (provides resources or assistance), Consulted (gives input before decisions), and Informed (kept up to date). For testing, it answers questions such as who creates test cases, who approves entry criteria, and who fixes environment issues. A RASCI matrix ends the “I thought you were doing it” confusion, accelerating decision-making.
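
A RASCI matrix can be stored as data and checked automatically. The sketch below applies one common convention: each activity has exactly one Accountable and at least one Responsible. The activities come from the questions above, and the role names are illustrative.

```python
# activity -> {role: RASCI letter}; roles here are illustrative
RASCI = {
    "Create test cases":      {"QA Engineer": "R", "QA Lead": "A",
                               "Business Analyst": "C", "Product Owner": "I"},
    "Approve entry criteria": {"QA Lead": "A", "Dev Lead": "C", "Release Manager": "R"},
    "Fix environment issues": {"DevOps Engineer": "R", "Platform Lead": "S", "QA Lead": "I"},
}


def rasci_problems(matrix: dict[str, dict[str, str]]) -> list[str]:
    """Flag activities without exactly one Accountable or without any Responsible."""
    problems = []
    for activity, assignments in matrix.items():
        letters = list(assignments.values())
        if letters.count("A") != 1:
            problems.append(f"{activity}: needs exactly one 'A', found {letters.count('A')}")
        if "R" not in letters:
            problems.append(f"{activity}: nobody is Responsible")
    return problems


print(rasci_problems(RASCI))  # ["Fix environment issues: needs exactly one 'A', found 0"]
```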

12. Defining SLAs (Service Level Agreements)

SLAs in testing might include “95% of Critical defects triaged within 4 hours” or “Test environment available 99% of business hours.” SLAs set customer expectations for responsiveness and availability. They also provide a contractual backbone for incident handling in outsourced or cross-team testing.
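
Measuring an SLA such as “95% of Critical defects triaged within 4 hours” reduces to a ratio over timestamps. Here is a minimal sketch with made-up timestamps. It uses wall-clock time and ignores business hours, which a real SLA calculation may need to exclude.

```python
from datetime import datetime, timedelta


def sla_compliance(events: list[tuple[datetime, datetime]], limit: timedelta) -> float:
    """Share of (created_at, triaged_at) pairs that met the response limit."""
    if not events:
        raise ValueError("no events to measure")
    within = sum(1 for created, triaged in events if triaged - created <= limit)
    return within / len(events)


critical = [
    (datetime(2024, 6, 3, 9, 0), datetime(2024, 6, 3, 10, 30)),   # 1.5 h
    (datetime(2024, 6, 3, 14, 0), datetime(2024, 6, 3, 17, 45)),  # 3.75 h
    (datetime(2024, 6, 4, 8, 0), datetime(2024, 6, 4, 13, 0)),    # 5 h, a breach
]
rate = sla_compliance(critical, timedelta(hours=4))
print(f"{rate:.0%} triaged within 4 h; target met: {rate >= 0.95}")
# 67% triaged within 4 h; target met: False
```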

13. Jira Reporting SOPs (Standard Operating Procedures)

A documented SOP for how to log defects, update statuses, link test cases, and generate dashboards in Jira. Without this, reporting becomes inconsistent, e.g., “Closed” meaning different things across teams. SOPs ensure that KPIs, trend data, and release reports are accurate and comparable.

14. Strong Release Management for Deployments on Lower Environments

Release management governs how code moves from Dev → QA → Staging → UAT. It includes version control, deployment windows, rollback plans, and smoke tests post-deployment. Weak release management causes “environment drift” and wasted test cycles due to untested or mismatched builds.

15. SWAT Teams Setup

A SWAT team is a small, cross-functional group (dev, test, ops) ready to resolve critical defects or environment outages without standard escalation delays. They operate with urgency and authority. SWAT teams prevent a single blocker from halting the entire test phase for days.

16. Defects Triage Protocols

Triage is a recurring meeting to review new defects: assign severity/priority, determine ownership, and decide fix vs. defer. Without triage, defects linger, critical ones get ignored, and testers keep retesting fixed issues. A clear triage protocol (e.g., daily 15-minute standup) maintains defect queue hygiene.

17. Connectivity

Testing often depends on integrations (APIs, databases, message queues, third-party services). A connectivity strategy includes service virtualization, mock endpoints, and fallback authentication. Unplanned connectivity failures waste tester time and produce false failures. Proactive monitoring of connectivity is mandatory.
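
Proactive monitoring can start as a probe that confirms each dependency is reachable before the test suite runs. That way, a network outage is reported as an environment problem rather than as dozens of false test failures. The sketch only confirms that a TCP port accepts connections, not that the service behaves correctly. The dependency names and ports are hypothetical.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, timed out, or name resolution failed
        return False


# Hypothetical dependencies of the system under test; replace with your own.
DEPENDENCIES = {
    "orders-db": ("localhost", 5432),
    "payments-mock": ("localhost", 8089),
}


def connectivity_report(deps: dict[str, tuple[str, int]]) -> dict[str, bool]:
    """Probe every dependency and map its name to reachable / unreachable."""
    return {name: can_connect(host, port) for name, (host, port) in deps.items()}


if __name__ == "__main__":
    for name, ok in connectivity_report(DEPENDENCIES).items():
        print(f"{name}: {'reachable' if ok else 'UNREACHABLE'}")
```

Running the probe as the first step of a pipeline and aborting on any `UNREACHABLE` entry keeps false failures out of the test results.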

18. Security Matrix

A mapping of security requirements to test types (e.g., authentication → API negative tests, authorization → role-based UI tests, data encryption → penetration tests). The security matrix ensures no security control goes untested. In modern DevOps, security testing must be continuous, not an annual event.
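
You can enforce the “no security control goes untested” rule in two steps. Treat the matrix as data, then fail a pipeline step when any control has no covering test. Here is a minimal sketch using the mappings above. “Audit logging” is a hypothetical extra control added to show a gap.

```python
SECURITY_MATRIX = {
    # security control -> test types that cover it (examples from the text above)
    "authentication": ["API negative tests"],
    "authorization": ["role-based UI tests"],
    "data encryption": ["penetration tests"],
    "audit logging": [],  # hypothetical control with no tests yet
}


def untested_controls(matrix: dict[str, list[str]]) -> list[str]:
    """Return controls that no test type covers."""
    return [control for control, tests in matrix.items() if not tests]


print(untested_controls(SECURITY_MATRIX))  # ['audit logging']
```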

19. Sign-Off

A formal approval (usually by QA Lead, Product Owner, and Ops) that all exit criteria are met and risks are communicated. Sign-off is not a rubber stamp; it includes a residual risk statement. It creates legal and business accountability, preventing releases from happening without a quality checkpoint.

20. Test Data Management

The process of provisioning realistic, compliant, and version-controlled data for testing. Poor test data leads to false positives and privacy breaches (e.g., using production customer data). A strong strategy includes data subsetting, synthetic data generation, and masking for GDPR/HIPAA.
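
Masking can preserve referential integrity if the same input always maps to the same token. One common approach, sketched here under stated assumptions, is a keyed hash (HMAC-SHA256 from Python's standard library). The field names, the key handling, and the 12-character token length are illustrative choices. The sketch does not decide which fields count as personal data.

```python
import hashlib
import hmac

# Placeholder key for illustration only; in practice load it from a secret store.
MASKING_KEY = b"replace-with-a-secret"


def pseudonymize(value: str, key: bytes = MASKING_KEY) -> str:
    """Keyed hash: the same input always yields the same token, so joins still work.

    Truncated to 12 hex characters for readability; keep more for large datasets.
    """
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:12]


def mask_record(record: dict[str, str]) -> dict[str, str]:
    """Mask direct identifiers; other fields are copied unchanged (decide per field)."""
    masked = dict(record)
    masked["name"] = f"Customer {pseudonymize(record['name'])}"
    # example.com is reserved for documentation (RFC 2606), so it points at no real customer.
    masked["email"] = f"user_{pseudonymize(record['email'].lower())}@example.com"
    return masked


row = {"customer_id": "C-1001", "name": "Jane Doe", "email": "Jane.Doe@corp.example"}
print(mask_record(row))
```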

21. Continuous Feedback Loop

A mechanism for testers to report process pain points (e.g., flaky tests, unclear requirements) directly to the team retro. This loop shortens the time from discovery of a process inefficiency to its resolution. Without it, the same inefficiencies degrade morale and delay releases indefinitely.

22. Test Automation Strategy

Not everything should be automated. Define what will be automated (regression, smoke, data-driven tests) vs. manual (exploratory, usability). The strategy includes framework choice, maintenance plan, and thresholds for flaky tests. Automation without strategy often becomes a costly, abandoned suite.
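
A flaky-test threshold needs a working definition of flakiness. This sketch assumes one option: a test is flaky on a commit if it both passed and failed on that same commit. The 5% quarantine threshold is also an assumed policy value, and the run data is invented.

```python
from collections import defaultdict


def flakiness(runs: list[tuple[str, str, bool]]) -> dict[str, float]:
    """Per test: share of commits on which it both passed and failed (same code, different outcome)."""
    outcomes = defaultdict(lambda: defaultdict(set))  # test -> commit -> {True, False}
    for test, commit, passed in runs:
        outcomes[test][commit].add(passed)
    return {
        test: sum(1 for results in by_commit.values() if len(results) == 2) / len(by_commit)
        for test, by_commit in outcomes.items()
    }


def quarantine_candidates(runs: list[tuple[str, str, bool]], threshold: float = 0.05) -> list[str]:
    """Tests whose flakiness exceeds the threshold (5% here is an assumed policy value)."""
    return sorted(test for test, rate in flakiness(runs).items() if rate > threshold)


runs = [
    ("test_checkout", "a1", True), ("test_checkout", "a1", False),  # flips on the same commit
    ("test_checkout", "b2", True),
    ("test_login", "a1", True), ("test_login", "b2", False), ("test_login", "b2", False),
]
print(flakiness(runs))              # {'test_checkout': 0.5, 'test_login': 0.0}
print(quarantine_candidates(runs))  # ['test_checkout']
```

Note that `test_login` fails consistently on `b2`. That is a genuine regression, not flakiness, so it is correctly left out of quarantine.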

23. Non-Functional Testing Plan

Coverage for performance, scalability, reliability, and usability. Functional testing alone can miss that an app “works” for a single user yet crashes under 100 concurrent users. The plan defines tools, realistic load models, and acceptable thresholds (e.g., response time < 300 ms).
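
Before checking a threshold such as “response time < 300 ms”, you first have to decide which statistic it applies to, because an average can hide a slow tail. This sketch assumes the threshold applies to the 95th percentile (nearest-rank method) and uses made-up samples. Load-testing tools usually report percentiles for you.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample that is >= pct% of all samples."""
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def meets_latency_slo(samples_ms: list[float], threshold_ms: float = 300, pct: float = 95) -> bool:
    """True if the chosen percentile is strictly below the threshold."""
    return percentile(samples_ms, pct) < threshold_ms


latencies_ms = [120, 135, 150, 180, 210, 240, 260, 280, 290, 310,
                140, 160, 170, 190, 200, 220, 230, 250, 270, 420]
print(percentile(latencies_ms, 95))     # 310
print(meets_latency_slo(latencies_ms))  # False
```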

24. Defect Prevention Techniques

Activities like root cause analysis of escaped defects, code review checklists for common bug types, and test-driven development (TDD). Prevention is cheaper than detection. Over time, this reduces the volume of defects entering the test phase.

25. Regression Test Selection

Given limited time, not every test case can be rerun. Use risk-based selection: prioritize changes in core modules, areas with recent defects, and features with high business impact. Without selection, regression suites keep growing release after release and slow delivery down. With it, you get the most risk coverage out of the time available.
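
Risk-based selection can be expressed as a score per test plus a time budget. The weights below are assumptions to tune, not a standard. The selection is a simple greedy pass (highest risk first), not an optimal schedule. Test names and modules are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RegressionTest:
    name: str
    module: str
    recent_defects: int   # defects found in this area over the last few releases
    business_impact: int  # 1 (low) .. 3 (high), assigned by the team
    minutes: int          # typical runtime


def risk_score(test: RegressionTest, changed_modules: set[str]) -> int:
    """Illustrative weighting; the weights are assumptions to tune, not a standard."""
    score = 5 if test.module in changed_modules else 0
    score += min(test.recent_defects, 3) * 2
    score += test.business_impact * 2
    return score


def select_tests(tests: list[RegressionTest], changed_modules: set[str],
                 budget_minutes: int) -> list[str]:
    """Greedy pick by descending risk while the time budget allows."""
    ranked = sorted(tests, key=lambda t: risk_score(t, changed_modules), reverse=True)
    chosen, used = [], 0
    for test in ranked:
        if used + test.minutes <= budget_minutes:
            chosen.append(test.name)
            used += test.minutes
    return chosen


suite = [
    RegressionTest("payments_refund", "payments", 2, 3, 20),
    RegressionTest("cart_totals", "cart", 0, 3, 10),
    RegressionTest("profile_avatar", "profile", 0, 1, 15),
    RegressionTest("payments_currency", "payments", 0, 2, 25),
]
print(select_tests(suite, changed_modules={"payments"}, budget_minutes=45))
# ['payments_refund', 'payments_currency']
```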

Conclusion

A mature QA and testing strategy is not a checklist—it is an adaptive system. The 25 components above, from Use Cases to Regression Selection, work together to shift quality left, eliminate waste, and make release decisions data-driven. Start by assessing which of these points your team lacks, then implement them incrementally. The result: fewer production escapes, faster test cycles, and a culture where quality is everyone’s responsibility.
